diff --git a/docs/hub/gguf.md b/docs/hub/gguf.md

new file mode 100644

index 000000000..95cc1b555

--- /dev/null

+++ b/docs/hub/gguf.md

@@ -0,0 +1,71 @@

+# GGUF

+

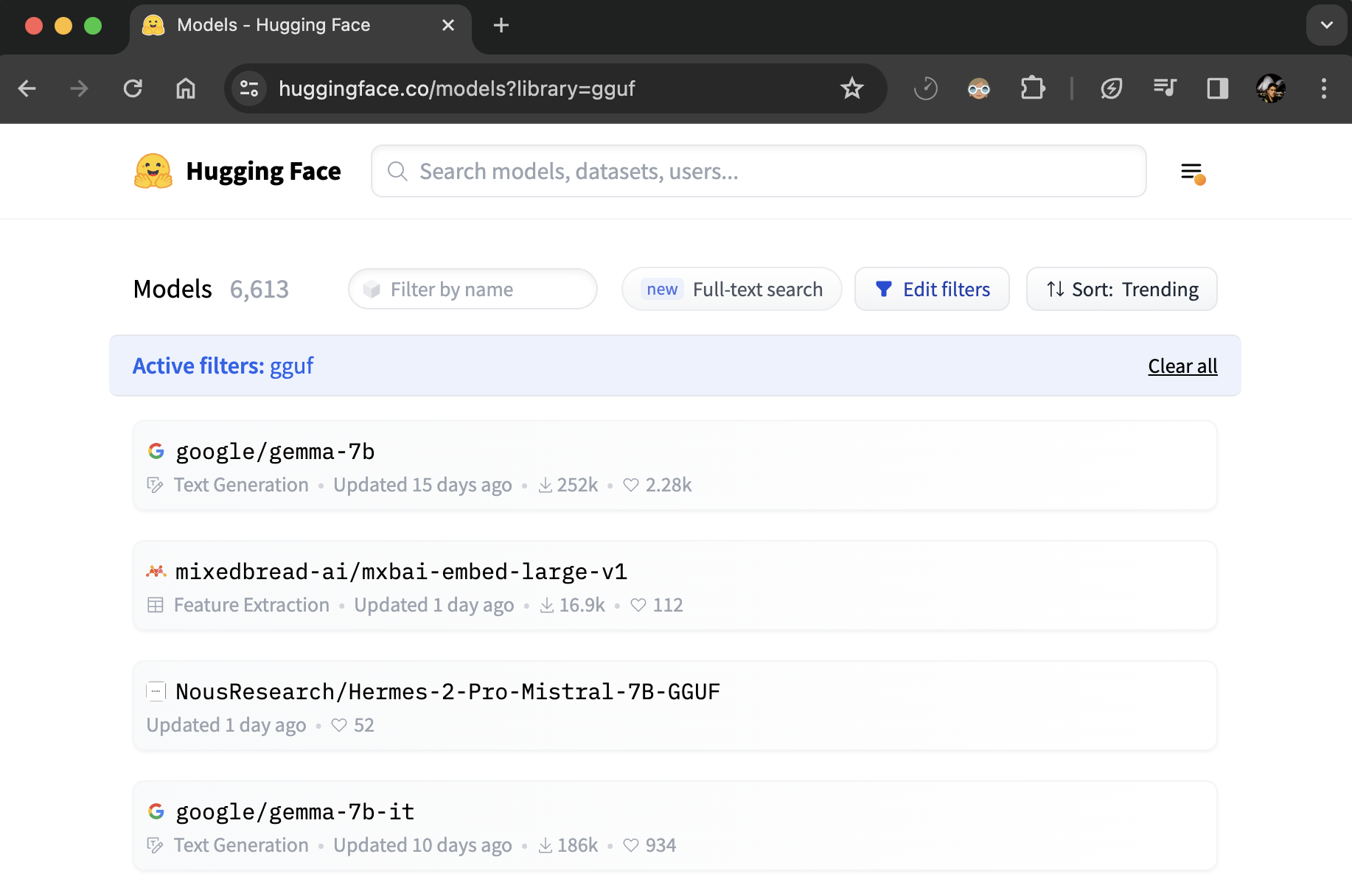

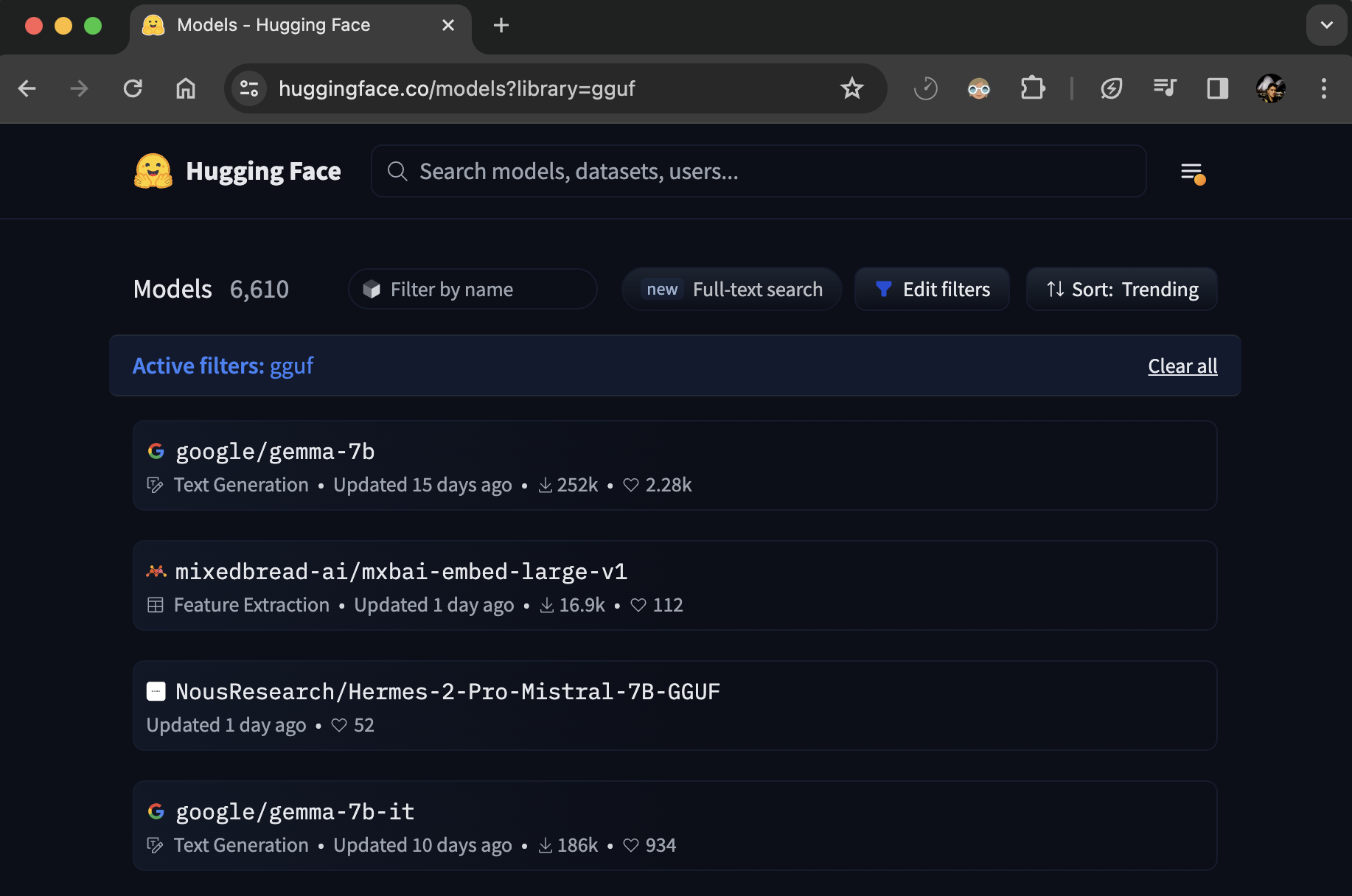

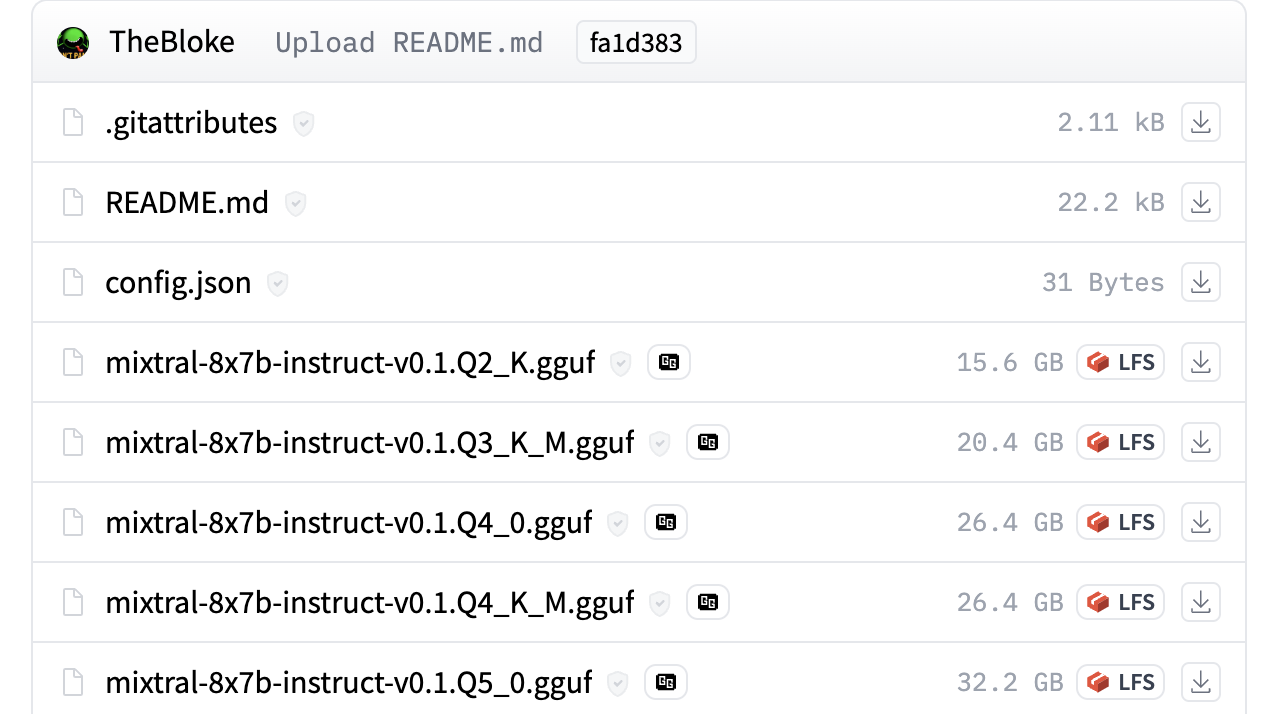

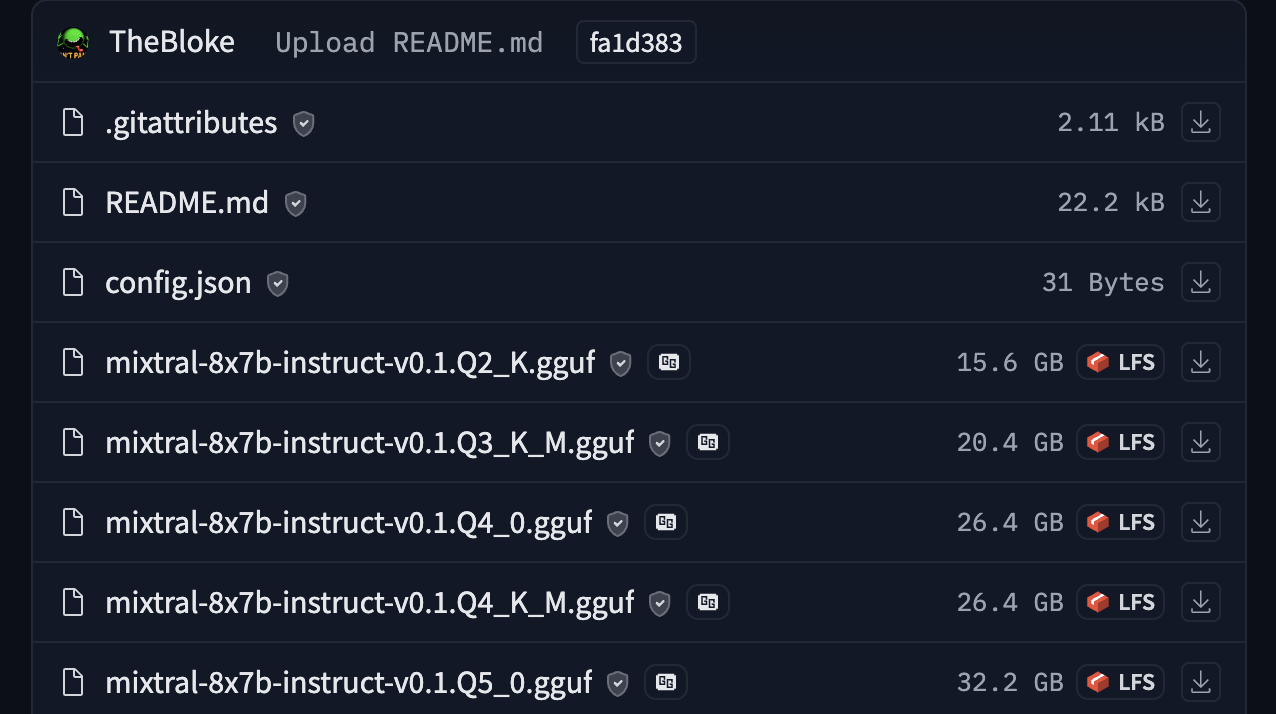

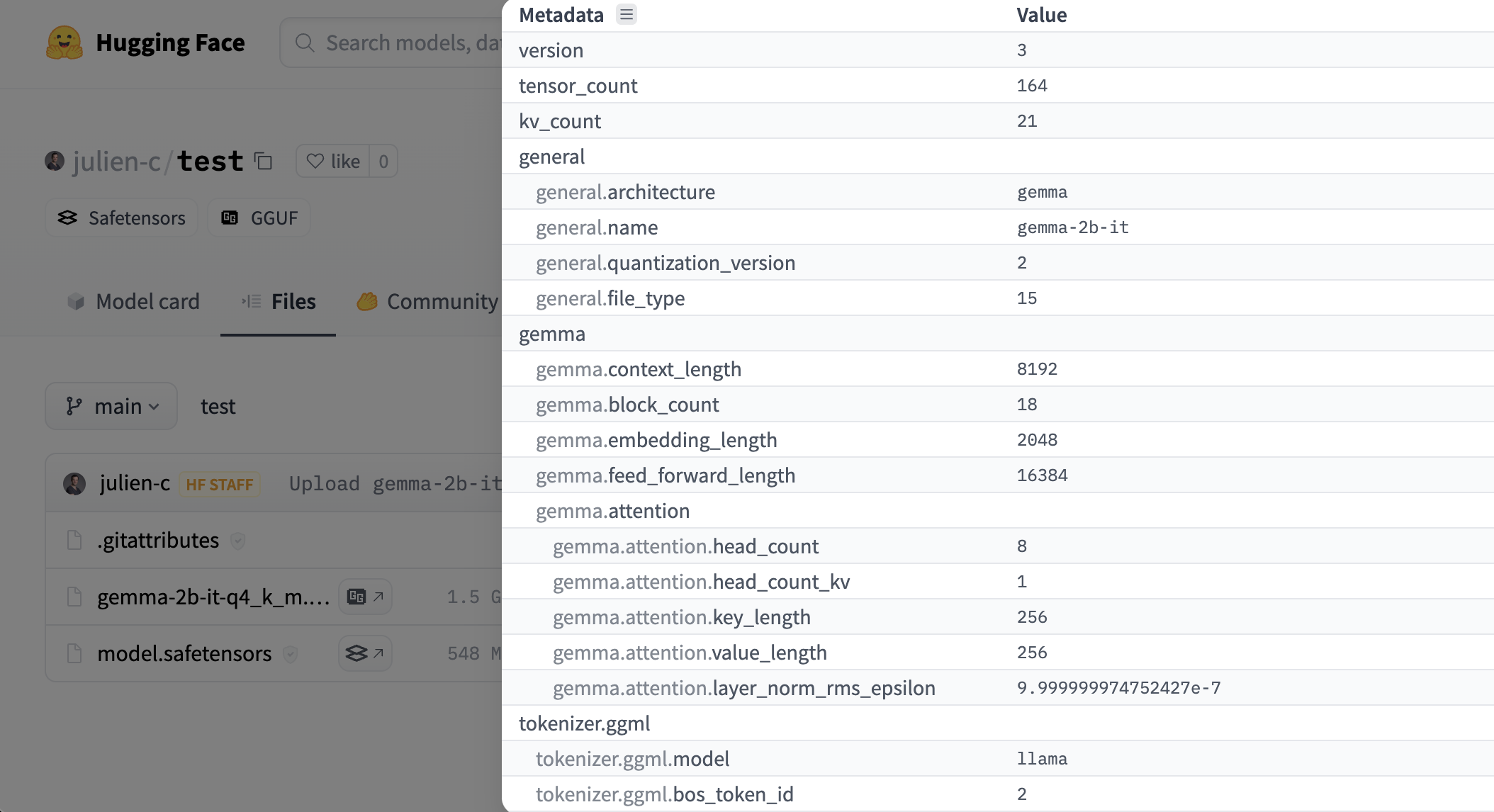

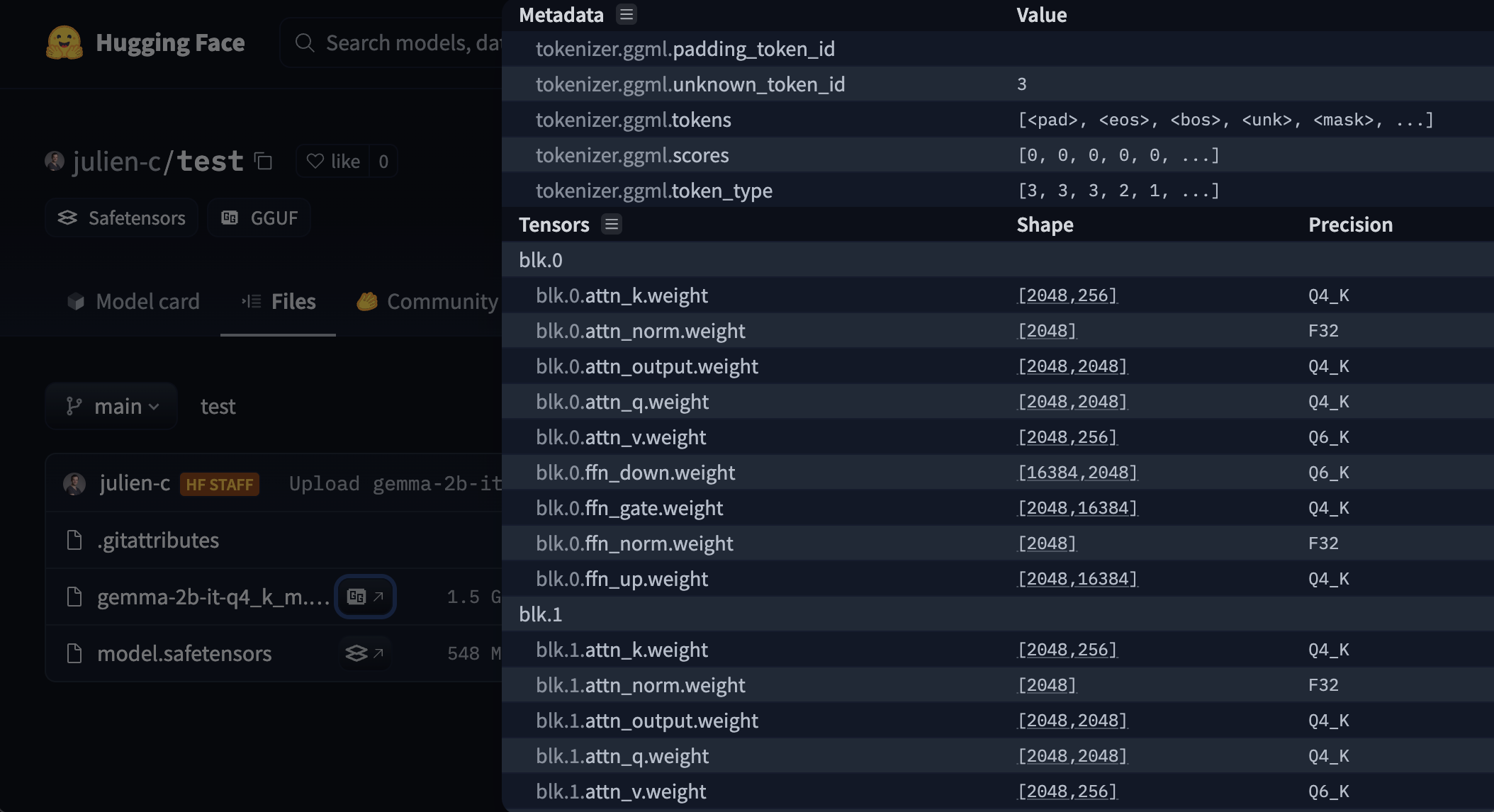

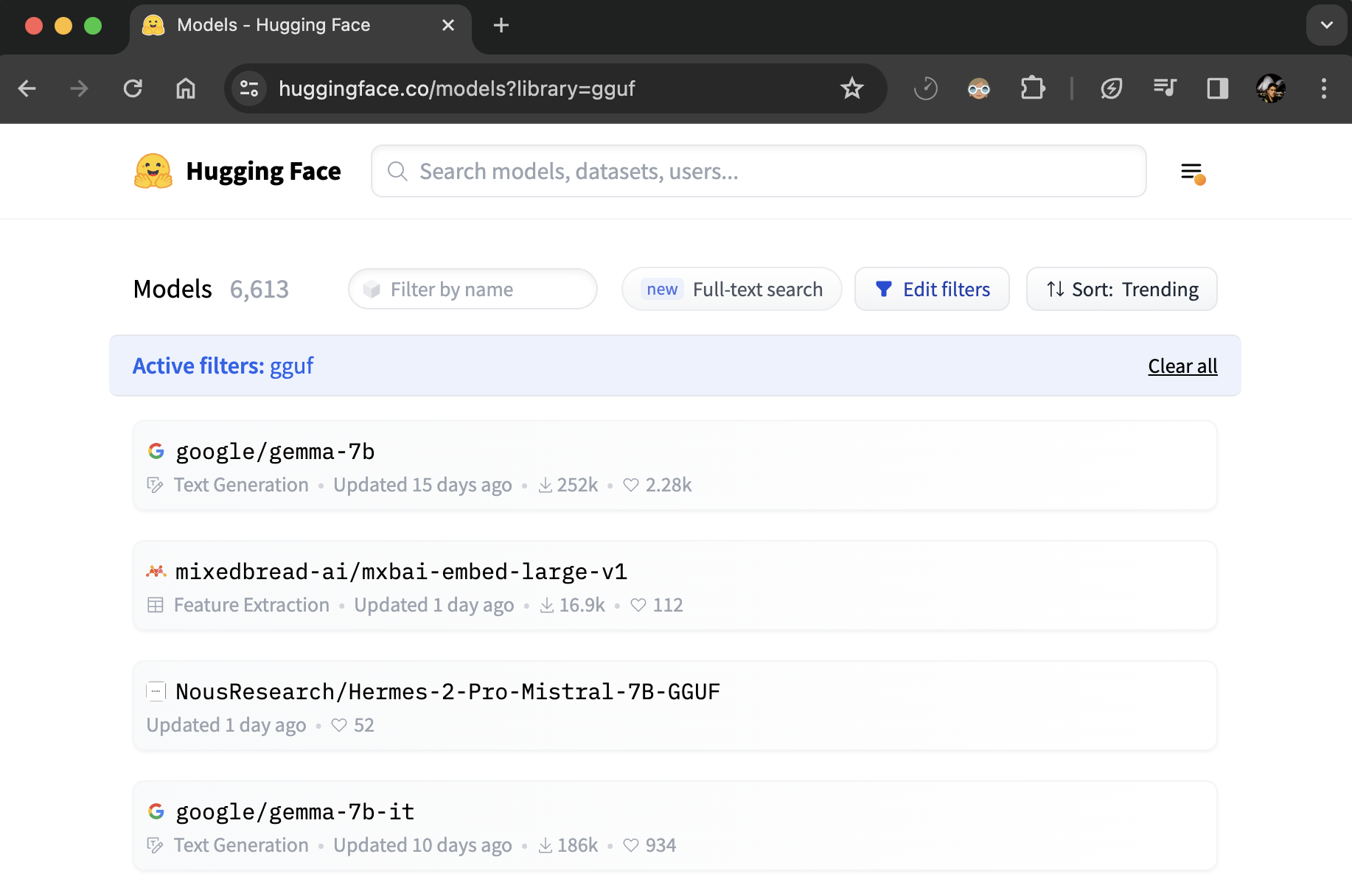

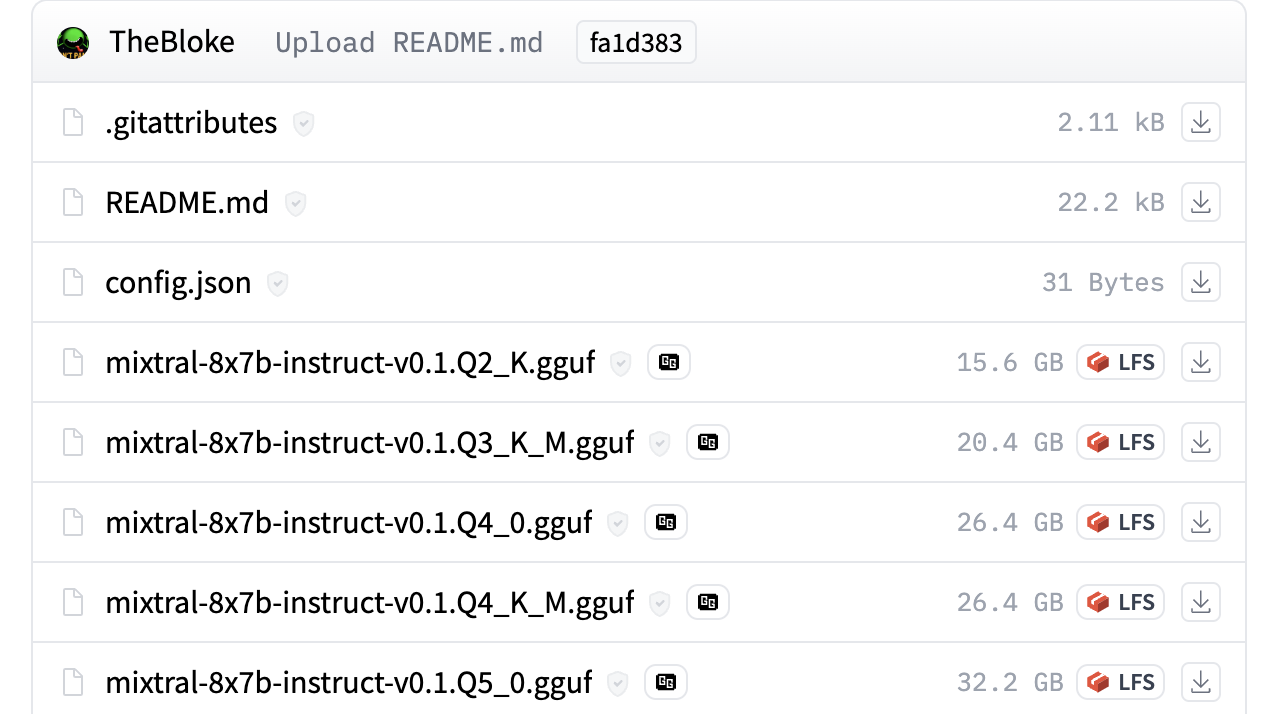

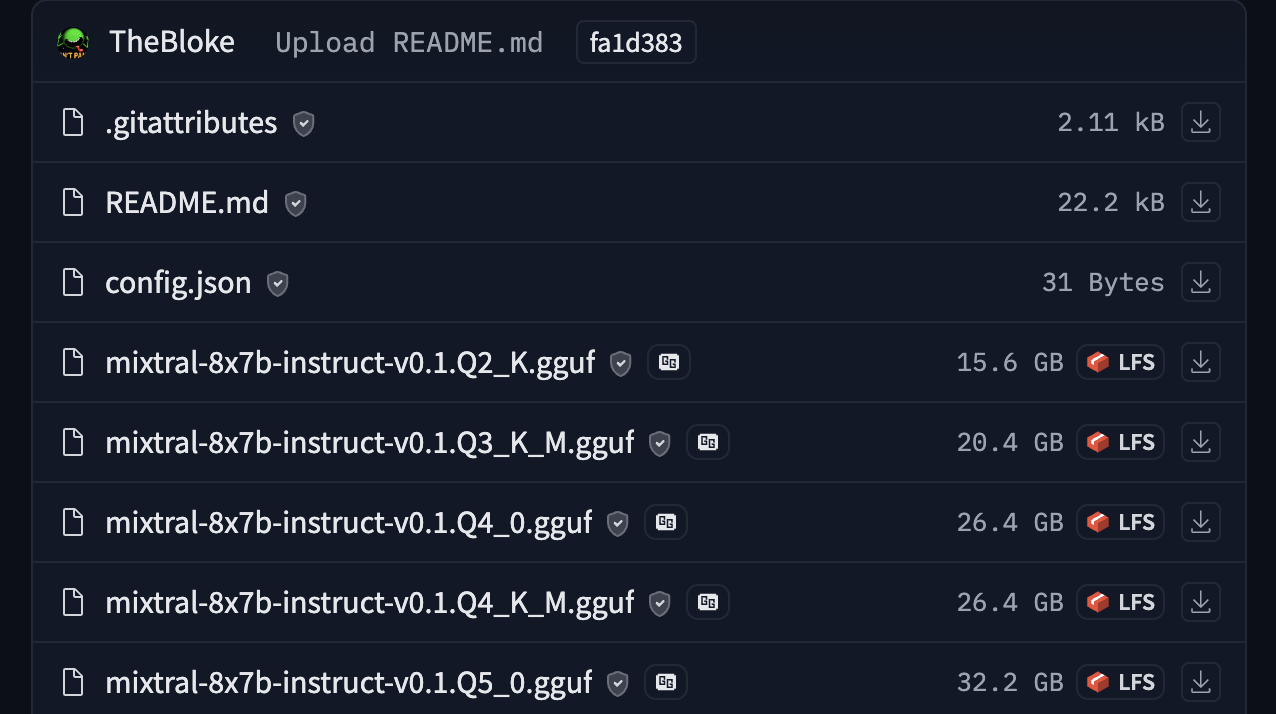

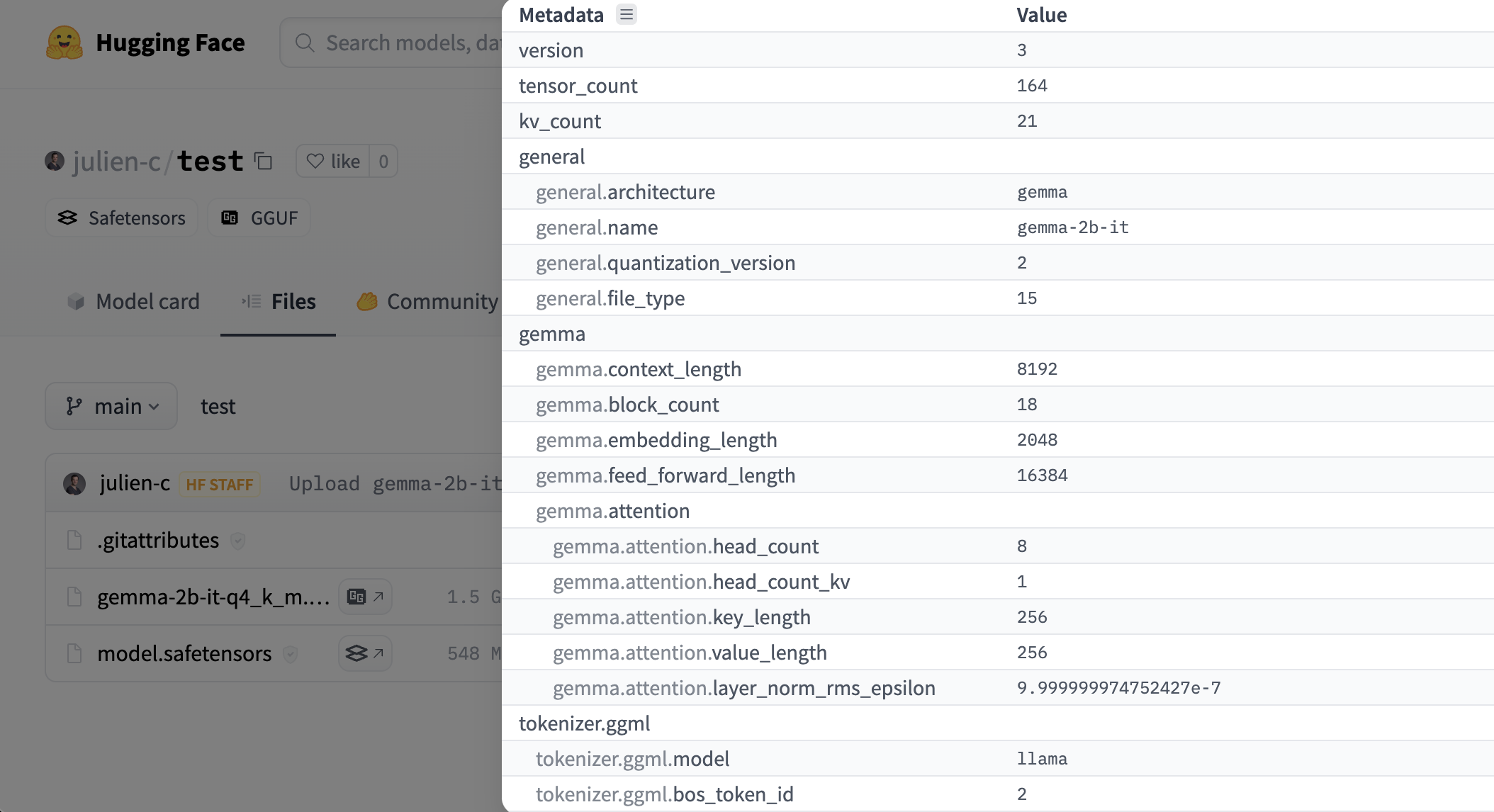

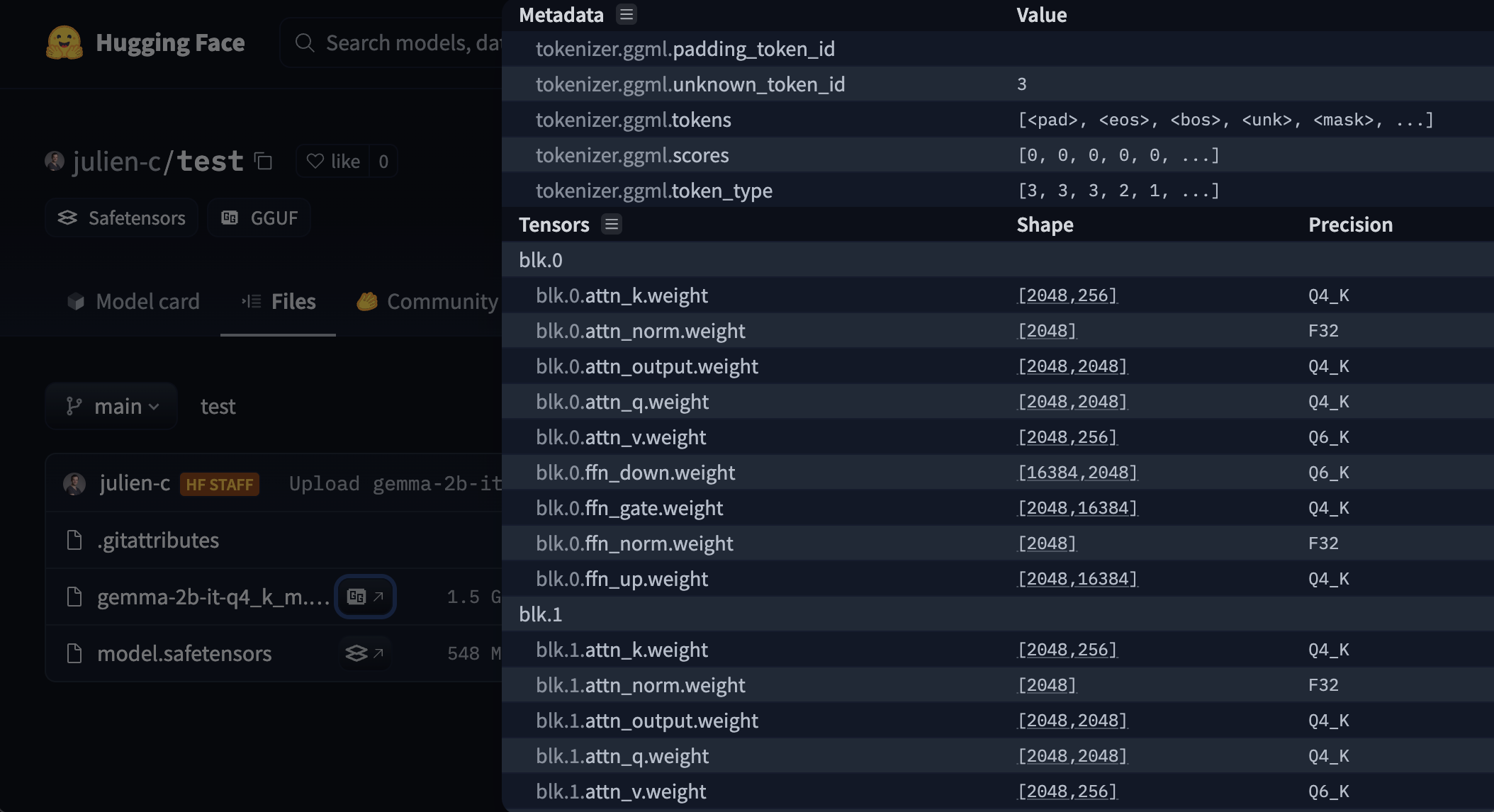

+Hugging Face Hub supports all file formats, but has built-in features for [GGUF format](https://github.com/ggerganov/ggml/blob/master/docs/gguf.md), a binary format that is optimized for quick loading and saving of models, making it highly efficient for inference purposes. GGUF is designed for use with GGML and other executors. GGUF was developed by [@ggerganov](https://huggingface.co/ggerganov) who is also the developer of [llama.cpp](https://github.com/ggerganov/llama.cpp), a popular C/C++ LLM inference framework. Models initially developed in frameworks like PyTorch can be converted to GGUF format for use with those engines.

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+ +

+ +

+ +

+ +

+ +

+ +

+ +

+